University-wide Assessment Instruments

Institutional Research & Effectiveness administers university-level assessments, including national surveys and standardized tests. The staff in Institutional Effectiveness are happy to help each academic program and academic support unit identify their target populations in the results of these instruments and provide guidance on how to incorporate these results into the annual IE report process.

Assessment Instruments & Results

The responsibilities of the Office of Institutional Effectiveness (OIE) include both assessment and institutional effectiveness. OIE conducts and facilitates assessment testing campus-wide. Assessment results are shared with the faculty, appropriate administrators, and other relevant offices and are used to enhance student learning and strategic planning.

Student Exit Survey Results

Students are asked to complete the exit survey when they apply for graduation. Survey results are compiled by semester and then rolled up into a single academic year. The following results provide information at the University level, and each academic program receives a report on its own graduates for that year. Many of the academic programs use these results in their annual IE reports.
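As a rough illustration of the roll-up described above, the sketch below sums per-semester tallies into one total per academic year. The record layout, the field names, and all numbers are hypothetical; the page does not describe the actual data format or the tools the office uses.

```python
from collections import defaultdict

# Hypothetical per-semester tallies for one survey item; the layout
# and the numbers are invented for illustration only.
semester_results = [
    # (academic_year, semester, responses, agree_count)
    ("2022-2023", "Fall", 40, 30),
    ("2022-2023", "Spring", 60, 51),
    ("2023-2024", "Fall", 50, 35),
]


def roll_up_by_year(rows):
    """Sum semester tallies into one total per academic year."""
    totals = defaultdict(lambda: {"responses": 0, "agree": 0})
    for academic_year, _semester, responses, agree in rows:
        totals[academic_year]["responses"] += responses
        totals[academic_year]["agree"] += agree
    return dict(totals)


yearly = roll_up_by_year(semester_results)
for year, t in sorted(yearly.items()):
    pct = 100.0 * t["agree"] / t["responses"]
    print(f"{year}: {t['responses']} responses, {pct:.1f}% agree")
```

Summing the raw counts before computing a percentage weights each semester by its number of respondents, which is usually preferable to averaging the semester percentages directly.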

Student Course Surveys

Course surveys are deployed through SmartEvals software at the end of every semester. The transition from ClassClimate to SmartEvals began with a pilot in the 2024 Maymester and will continue through Summer 2024 courses, with full implementation scheduled for Fall 2024.

In October 2021, Faculty Senate approved a new set of questions for a revised course survey. The overall results for each semester will be provided at this location going forward:

- Fall Overall Results

- Spring Overall Results

In Spring 2022, faculty were given the opportunity for the first time to add up to three questions to their student course surveys. In Fall 2023, faculty were given the option to submit additional questions to the question bank. The question bank is divided into five categories: Instructional Design, Instructional Delivery, Instructional Assessment, Course Impact, and Instructor Submitted. To preview the question options, see the five question tables below. Faculty can add questions to each course section they teach, and the additional questions can differ from course to course. Faculty will be sent a link to add the three optional questions via SmartEvals (see the instructions on how to add the optional questions to your course surveys).

Optional Question Instructions

| Question Text (Instructional Design) |
|---|
| The course objectives were well explained. |
| The course assignments were related to the course objectives. |
| The instructor related the course material to my previous learning experiences. |
| The instructor incorporated current material into the course. |
| The instructor made me aware of the current problems in the field. |
| The instructor gave useful writing assignments. |
| The instructor adapted the course to a reasonable level of comprehension. |
| The instructor exposed students to diverse approaches to problem solutions. |
| The instructor provided a sufficient variety of topics. |
| Prerequisites in addition to those stated in the catalog are necessary for understanding the material in this course. |
| I found the coverage of topics in the assigned readings too difficult. |

| Question Text (Instructional Delivery) |
|---|
| The instructor gave clear explanations to clarify concepts. |
| The instructor's lectures broadened my knowledge of the area beyond the information presented in the readings. |
| The instructor demonstrated how the course material was worthwhile. |
| The instructor's use of examples helped to get points across in class. |
| The instructor's use of personal experiences helped to get points across in class. |
| The instructor encouraged independent thought. |
| The instructor encouraged discussion of a topic. |
| The instructor stimulated class discussion. |
| The instructor adequately helped me prepare for exams. |
| The instructor was concerned with whether or not the students learned the material. |
| The instructor developed a good rapport with me. |
| The instructor recognized individual differences in students' abilities. |
| A warm atmosphere was maintained in this class. |
| The instructor helped students to feel free to ask questions. |
| The instructor demonstrated sensitivity to students' needs. |
| The instructor tells students when they have done particularly well. |
| The instructor used student questions as a source of discovering points of confusion. |
| The instructor's vocabulary made understanding of the material difficult. |
| The instructor demonstrated role model qualities that were of use to me. |
| The instructor's presentations were thought provoking. |
| The instructor's classroom sessions stimulated my interest in the subject. |
| Within the time limitations, the instructor covered the course content in sufficient depth. |
| The instructor attempted to cover too much material. |
| The instructor moved to new topics before students understood the previous topic. |
| The instructor encouraged out-of-class consultations. |
| The instructor carefully explained difficult concepts, methods, and subject matter. |
| The instructor used a variety of teaching approaches to meet the needs of all students. |
| The instructor found ways to help students answer their own questions. |
| The instructor asked students to share ideas and experiences with others whose backgrounds and viewpoints differ from their own. |

| Question Text (Instructional Assessment) |
|---|
| The exams were worded clearly. |
| Examinations were given often enough to give the instructor a comprehensive picture of my understanding of the course material. |
| The exams concentrated on the important aspects of the course. |
| I do not feel that my grades reflected how much I have learned. |
| The grades reflected an accurate assessment of my knowledge. |
| The instructor was readily available for consultation with students. |
| The criteria for good performance on the assignments or assessments were clearly communicated. |

| Question Text (Course Impact) |
|---|
| This course challenged me intellectually. |
| The course helped me become a more creative thinker. |
| I developed greater awareness of societal problems. |
| I felt free to ask for extra help from the instructor. |
| I learned to apply principles from this course to other situations. |
| This course challenged me to think critically and communicate clearly about the subject. |
| The community engaged learning component of this course enhanced my understanding of course content. |

| Question Text (Instructor Submitted) |
|---|
| Each unit had ample time devoted to it in class. |
| I feel previous courses prepared me for this course. |
| This course helped prepare me for my career and future plans following graduation. |
| The Canvas website for this course was organized well. |
| My knowledge gained from the course pre-requisite(s) was important to my success in this class. |
| I felt that the instructor was generally enthusiastic about the subject matter of this course. |
| I felt that the instructor generally made class interesting. |
| Overall, I felt that the required outside readings were valuable. |
| Overall, I felt that the instructor cared about the students. |
| This course challenged you to meaningfully reflect, question, evaluate, and potentially adjust your current decision-making process. |
| The assessment methods (homework, exams, presentations, papers, etc.) used in this course sufficiently allowed me to demonstrate my learning to the instructor. |
| I learned at least three things from this course that I will remember for the rest of my life. |
| Because of this course, I now have a better understanding of how economists think than I did before taking this course. |
| I believe the instructor cares about student learning. |
| I believe the instructor cares about general student well-being. |
| It was easy to see how this content can be applied when I graduate. |
| This course improved my knowledge of graphic design. |
| In this course, I was encouraged to ask questions and participate in class discussion via in-person and/or virtual methods. |
| The weekly out-of-class help sessions provided by the TAs were helpful to me in understanding course material and completing assignments. |
| This class is beneficial within my program of study. |

ETS Proficiency Profile

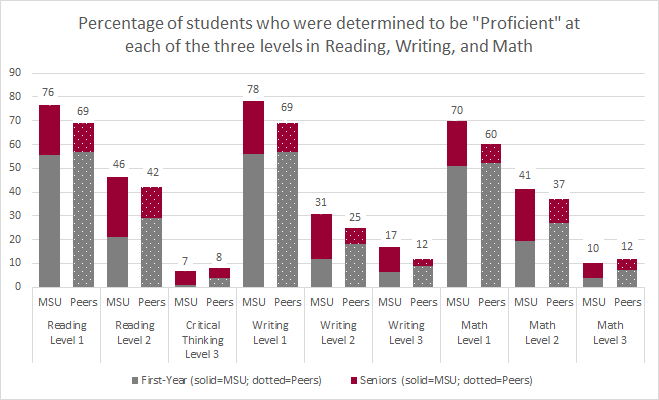

Members of the Office of Institutional Research and Effectiveness administer this test to first-year students in the fall and senior students in the spring, representing all colleges at the university. This test evaluates four core skill areas: reading, writing, critical thinking, and mathematics, along with context-based areas of humanities, social sciences, and natural sciences. MSU’s results are also benchmarked against our Carnegie peers. Individual ETS reports share results for several academic programs that use these data in their annual IE reports. Furthermore, the university-level results are used as part of the University’s General Education program.

The ETS Proficiency Profile scores first-year and senior students on their proficiency in reading and critical thinking, writing, and mathematics across three levels of competency (Level 3 being the most difficult). For the purposes of MSU's assessment, all seniors, including those who have transferred in coursework, may complete the exam; however, only seniors who have not transferred coursework are used in the comparison with first-year students. Each year, MSU evaluates what percentage of its first-year students and non-transfer seniors are proficient in reading, writing, and mathematics. These percentages can be compared to our Carnegie peers in the R1 and R2 classifications. The table below provides the average proficiency of MSU students compared to Carnegie peers from 2012 to 2017. As these data show, MSU's first-year students start at a level below our Carnegie peers; however, MSU seniors surpass our Carnegie peers in all areas except Critical Thinking and Level 3 Mathematics.
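The comparison rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `TestTaker` record, its field names, and the sample data are hypothetical, and the sketch does not reproduce how ETS or MSU actually score or report results.

```python
from dataclasses import dataclass


@dataclass
class TestTaker:
    # All field names are hypothetical, chosen for illustration only.
    class_level: str          # "first-year" or "senior"
    transferred_credit: bool  # True if the student transferred in coursework
    proficient: dict          # skill name -> True/False at a given level


def comparison_group(takers, class_level):
    """Return the students used in the first-year vs. senior comparison."""
    group = [t for t in takers if t.class_level == class_level]
    if class_level == "senior":
        # Seniors with transferred coursework may take the exam,
        # but they are left out of the comparison.
        group = [t for t in group if not t.transferred_credit]
    return group


def percent_proficient(takers, class_level, skill):
    """Percentage of the comparison group marked proficient in `skill`."""
    group = comparison_group(takers, class_level)
    if not group:
        return None  # no students to report on
    count = sum(1 for t in group if t.proficient.get(skill, False))
    return 100.0 * count / len(group)


# Made-up records, not MSU results.
takers = [
    TestTaker("first-year", False, {"reading": True, "mathematics": False}),
    TestTaker("first-year", False, {"reading": False, "mathematics": False}),
    TestTaker("senior", False, {"reading": True, "mathematics": True}),
    TestTaker("senior", False, {"reading": True, "mathematics": False}),
    TestTaker("senior", True, {"reading": False, "mathematics": False}),
]

print(percent_proficient(takers, "first-year", "reading"))
print(percent_proficient(takers, "senior", "reading"))
```

In this made-up sample, the transfer senior is excluded, so the senior percentages are computed over two students rather than three.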

NSSE (National Survey of Student Engagement)

The NSSE measures students’ engagement with coursework and studies and how the university motivates students to participate in activities that enhance student learning. This survey is used to identify practices that institutions can adopt or reform to improve the learning environment for students. Each year, the Office of Institutional Research and Effectiveness deploys the online survey to freshmen and seniors. The institution then compares its results with three groups: peers in the same Carnegie classification, a peer group determined by the NSSE examiners, and a group of peers that MSU has identified. Individual reports are created for units that use the results for their annual IE reports. Certain questions are used in several university-wide assessment activities, such as the Maroon & Write Quality Enhancement Plan and the General Education Assessment Plan.

- 2023 NSSE Snapshot

- 2021 NSSE Snapshot

- 2020 NSSE Snapshot

- 2019 NSSE Snapshot

- 2018 NSSE Snapshot

- 2016 NSSE Snapshot

- 2014 NSSE Executive Summary

- 2013 NSSE Executive Summary

- 2012 NSSE Executive Summary

- 2011 NSSE Executive Summary

- 2010 NSSE Executive Summary (includes 2008)

FSSE (Faculty Survey of Student Engagement)

- 2014 FSSE Executive Summary

- 2013 FSSE Executive Summary

- 2012 FSSE Executive Summary

- 2011 FSSE Executive Summary

Last Updated: November 8, 2024